Battle-testing Temporal - Part 1

Temporal is gaining traction, spearheading a new generation of Workflow tools/frameworks: Durable Execution frameworks. Unlike most Workflow tools/frameworks, Durable Execution frameworks take a code-centric approach (no external models) meant to assist the developer in building complex Workflows (Sagas) that can take anywhere from seconds to months. There is no limit.

They promise out-of-the-box support for (re)starting Workflows from exactly where they were (upon failure), based on managed event-sourced state.

They are said to support a wide range of use cases in the wild, from multistep financial processes to ML pipelines.

From a 1,000-foot view, it seems like a Durable Execution framework like Temporal could indeed be the first developer-friendly generation of Workflow engines to assist a wide variety of long-running processes.

So join me on this journey and let’s find out if it is truly possible to write production-grade long-running processes in a non-invasive, developer-friendly manner by asking my favorite architecture question…

Will it Poker?

In more words: does the architecture withstand all the (non-)functional requirements that stem from supporting a fully fledged poker game server, capable of supporting arbitrary numbers of poker tournaments, each supporting arbitrary numbers of players?

Dealing with:

- Transferring of funds from/to player accounts
- Coordination between multiple tables (games) part of the same tournament
- Server initiated actions/timeouts like dealing cards and default player actions
- Stateful behavior that needs to survive all sorts of failures
- Usage of randomization to shuffle decks of cards
- High traceability & observability requirements
- Low-latency fan-out of updates to clients
- Clients with incentive to cheat/break the system

All of which turns it into my go-to proof-of-concept use case.

| Disclaimer: this is not a "you should build game servers like this" blog, nor a "you should build game servers with Temporal" blog. It is merely a blog series about testing whether Temporal is up to the task for such a complex use case, highlighting which tradeoffs/benefits it brings. |

Skipping the plumbing code to set up a workflow, I want to focus in this part 1 on the actual business logic instead.

DIY Approach - what I had to start with

The following pseudocode provides the basic generalized set-up of the code for a turn-based (Poker table) game server. While it has nothing to do with Temporal, it provides a baseline for the code before adding Temporal into the mix. Feel free to laugh at the semi-intentionally naive approach ;)

    synchronized void runGame(GameState state) {
        while (!state.done) {
            makeProgressIfPossible();
            wait(playerActionTimeout); // Object.wait: releases the lock until notified or timed out
            if (!actionTaken) {
                // the timeout expired without a player action
                playForCurrentPlayer();
                turnToNextPlayer();
            }
            this.actionTaken = false;
            persistState(state);
        }
    }

    synchronized void playerAction(Action action) {
        if (legalAction(action)) {
            doAction(action);
            this.actionTaken = true;
            turnToNextPlayer();
            persistState(state);
            notifyAll(); // Object.notifyAll: wakes up runGame
        }
    }

how this pattern works

For those not familiar with plain old Java object locking: this works because the Object.wait(playerActionTimeout) method yields its thread and releases its lock on the game server object, such that another thread (that of the player) may execute playerAction within the specified timeout.

The Object.notifyAll() call explicitly wakes up any waiting threads, so that Object.wait never waits longer than needed.
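To make the locking mechanics concrete, here is a minimal runnable sketch of the same wait/notify handshake, stripped of all game logic. The class and method names (`TurnLock`, `awaitAction`) are made up for this illustration, and unlike the pseudocode above it waits in a loop so that a spurious wake-up is not mistaken for a player action.

```java
/** Minimal stand-in for the game server's monitor; all names here are illustrative. */
final class TurnLock {
    private boolean actionTaken = false;

    /** Waits up to timeoutMillis for a player action; returns true if one arrived in time. */
    synchronized boolean awaitAction(long timeoutMillis) {
        long deadline = System.nanoTime() + timeoutMillis * 1_000_000L;
        while (!actionTaken) { // the loop guards against spurious wake-ups
            long remainingMillis = (deadline - System.nanoTime()) / 1_000_000L;
            if (remainingMillis <= 0) {
                return false; // timed out: the server should act for the player
            }
            try {
                wait(remainingMillis); // releases the monitor while parked
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt(); // preserve the interrupt for callers
                return false;
            }
        }
        actionTaken = false; // consume the action so the next turn starts clean
        return true;
    }

    /** Called from a player's thread; it can enter while the game loop is parked in wait(). */
    synchronized void playerAction() {
        actionTaken = true;
        notifyAll(); // wake the game loop right away instead of letting the timeout run out
    }

    public static void main(String[] args) {
        TurnLock lock = new TurnLock();
        new Thread(lock::playerAction).start();
        System.out.println("action arrived in time: " + lock.awaitAction(5_000));
        System.out.println("action arrived in time: " + lock.awaitAction(50));
    }
}
```

The first call returns as soon as the player thread has acted, well before the five seconds are up. The second call has nobody to wake it and runs into its timeout.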

the rough part

In order to properly restart upon failure, such server code not only requires carefully self-managed state persistence, it also requires some sort of (distributed) locking mechanism to make sure exactly one server is running/restarting the game at any given point in time.

When bringing observability, error handling, test support, versioning and transactionality into the mix, building such a layer yourself is, depending on the exact use case, no small feat. I know this because I built one myself once before, using the same use case, as part of another architectural exploration of Message-Driven Moduliths based on Kafka. While great as a feasibility study for such an architecture, I would recommend against rolling your own framework for any professional use case.

Enter - Temporal

So, could Temporal be such a framework? Doing the heavy lifting while keeping my own code simple?

And what would vanilla Java code look like when adapted to a Temporal Workflow that supports durable execution?

Directly translated to Temporal

    @WorkflowMethod
    void runGame(GameState state) {
        while (!state.done) {
            makeProgressIfPossible();
            // blocks until the condition holds or the timeout expires; returns false on timeout
            if (!Workflow.await(playerActionTimeout, () -> this.actionTaken)) {
                playDefaultActionForCurrentPlayer();
                turnToNextPlayer();
            }
            this.actionTaken = false; // reset for the next turn, as in the baseline
        }
    }

    @SignalMethod
    void playerAction(Action action) {
        if (legalAction(action)) {
            doAction(action);
            this.actionTaken = true;
            turnToNextPlayer();
        }
    }

The first thing to notice is how little actually had to change to run. The wait/notify has been replaced by Workflow.await(timeout, conditionFunction), which awaits a specific condition.

synchronized is no longer needed because Temporal will never ever have multiple threads active in the same workflow at the same time.

Manually persisting the state is also gone, as Temporal will replay all events (signals) upon restart if needed to re-reach the same internal game state automatically.
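As a rough mental model of that replay, consider the following toy sketch in plain Java. It is not how Temporal is implemented; `BetPlaced` and `TableState` are hypothetical names, and the "history" is just a list. The point is only that state which is never stored can still be recovered, as long as the inputs are recorded and applying them is deterministic.

```java
import java.util.ArrayList;
import java.util.List;

final class ReplayDemo {
    /** Hypothetical recorded signal: a player put chips into the pot. */
    record BetPlaced(String player, int amount) {}

    /** In-memory game state that is never persisted directly. */
    static final class TableState {
        int pot = 0;
        final List<String> actedPlayers = new ArrayList<>();

        void apply(BetPlaced bet) {
            pot += bet.amount();
            actedPlayers.add(bet.player());
        }
    }

    /** Rebuilds the state from scratch by re-applying the recorded history in order. */
    static TableState replay(List<BetPlaced> history) {
        TableState state = new TableState();
        history.forEach(state::apply);
        return state;
    }

    public static void main(String[] args) {
        List<BetPlaced> history = List.of(new BetPlaced("alice", 10), new BetPlaced("bob", 20));
        TableState recovered = replay(history);
        System.out.println(recovered.pot + " " + recovered.actedPlayers);
    }
}
```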

In order to update any externally running process, some state change needs to be reported. Temporal provides 4 options for this:

- Calling another Workflow’s @SignalMethod (to update another workflow)
- Implementing a @QueryMethod for fast retrieval of some current state
- Pushing state out by means of calling an ActivityInterface that would store/push state to somewhere
- New since 2025: Implementing an @UpdateMethod that is able to process data and send back a result, just like the primary workflow method

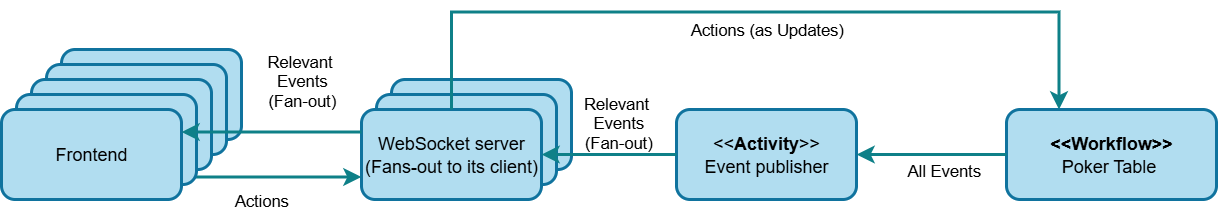

While the latest one is obviously very powerful, I felt it would introduce a lot of changes to the Workflow as well my envisioned architecture. Which at this point is assumed to look like something this:

Specifically sending Actions to the workflow and fanning out results from different components.

Therefore, I opted to stay close to the baseline code and keep sending out state changes as events, using an Activity from anywhere in the code that had a meaningful change to convey to the clients. I am not caring too much about latency for now, and this properly battle-tests high-frequency use of Activities in the process.

Why the above code-pattern is not correct anymore

The direct translation from the object lock-based approach to a Workflow introduces one small but vital difference in the execution ordering of the code!

In Temporal, Activities are recorded in history first and then scheduled for execution asynchronously.

As a result of this, calling an activity (even a void method) triggers a yield of the thread/process that is currently executing the workflow method/signal handler that called it.

This means its flow is potentially interleaved with (parts of) one or more other @SignalMethod/@WorkflowMethod methods before continuing from where it called the activity.

    @WorkflowMethod
    void runGame(GameState state) {
        someActivity.doSomething(); // activities yield just like Workflow.await...
        // <-- other workflow code may execute in between!
        somethingElse();
        // <-- no other workflow code can run in between
        somethingElse();
    }

Coming from a baseline that did not expect any interleaving except on explicit calls to Object.wait (now Workflow.await), this causes subtle issues resulting from out-of-order program flow.
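The effect can be reproduced with a toy single-threaded model, sketched below. This is not Temporal's scheduler; `InterleavingDemo`, `deliver` and `callActivity` are names invented for the illustration. A pending signal handler runs at the moment the main flow "calls an activity", so the turn is advanced twice and a player is skipped.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

/** Toy single-threaded model of a workflow; not Temporal's actual scheduler. */
final class InterleavingDemo {
    private final Deque<Runnable> pendingSignals = new ArrayDeque<>();
    final List<String> trace = new ArrayList<>();
    private int currentPlayer = 0;

    /** A signal arrives; its handler runs at the next yield point. */
    void deliver(Runnable handler) {
        pendingSignals.add(handler);
    }

    /** Stands in for a blocking activity call: control is handed to waiting handlers. */
    private void callActivity(String name) {
        trace.add("activity " + name + " for player " + currentPlayer);
        while (!pendingSignals.isEmpty()) {
            pendingSignals.poll().run();
        }
    }

    void turnToNextPlayer() {
        currentPlayer++;
        trace.add("turn -> player " + currentPlayer);
    }

    /** Naive main flow: assumes nothing changes between the activity call and the next line. */
    void playDefaultAction() {
        callActivity("publishDefaultAction"); // yields here
        turnToNextPlayer();                   // the handler may already have advanced the turn
    }

    public static void main(String[] args) {
        InterleavingDemo table = new InterleavingDemo();
        table.deliver(table::turnToNextPlayer); // a late player action that also advances the turn
        table.playDefaultAction();
        table.trace.forEach(System.out::println);
    }
}
```

The trace shows the activity being published for player 0, followed by two turn changes in a row: player 1 never gets to act.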

Idiomatic Temporal workflow pattern

Because of the plethora of issues that may arise from executing complex logic from multiple entry points, the general advice seems to be to keep @SignalMethod methods dumb: instead of fully processing a signal itself, a handler stores the incoming work in some form of queue and leaves it up to the sole @WorkflowMethod to actually process it.

(To me this made quite a bit of sense, which is another reason not to dive into the @UpdateMethod-based state sharing approach for now.)

When applied to the example above, this translates to:

    @WorkflowMethod
    void runGame(GameState state) {
        while (!state.done) {
            makeProgressIfPossible();
            Action action;
            if (Workflow.await(playerActionTimeout, () -> this.action != null)) {
                action = this.action; // a player acted in time
            } else {
                action = defaultActionForCurrentPlayer(); // timeout: act on the player's behalf
            }
            doAndClearAction(action); // sets this.action back to null
        }
    }

    @SignalMethod
    void playerAction(Action action) {
        // only store the action; all processing happens in runGame
        if (this.action == null && legalAction(action)) {
            this.action = action;
        }
    }

Not only does this solve the immediate problems with the initial, naively translated design, it actually deduplicates logic and significantly lowers the cognitive load of dealing with competing sub-processes mutating state and causing side effects.

You could say, Temporal forced me into a better design.

Note that I didn’t use an actual queue in this example, but instead opted to ignore any additional incoming action before the current one was processed. I mean, you can’t bravely declare CALL, follow it with a sneaky fold, and expect the fold to count.
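Isolated from the workflow, the single-slot behaviour can be sketched as follows. `ActionSlot` is a hypothetical helper, with plain strings standing in for the `Action` type. If every incoming action had to count, a `java.util.ArrayDeque` drained by the main loop would take its place.

```java
import java.util.Optional;

/** Single-slot inbox: keeps the first pending action and ignores later ones. */
final class ActionSlot {
    private String pending; // null means "no action waiting"

    /** What a dumb signal handler would do: store the action, never process it. */
    boolean offer(String action) {
        if (pending != null) {
            return false; // an earlier action is still waiting; drop this one
        }
        pending = action;
        return true;
    }

    /** What the main workflow loop would do once per turn. */
    Optional<String> take() {
        Optional<String> result = Optional.ofNullable(pending);
        pending = null;
        return result;
    }

    public static void main(String[] args) {
        ActionSlot slot = new ActionSlot();
        System.out.println(slot.offer("CALL")); // accepted
        System.out.println(slot.offer("FOLD")); // ignored, CALL is still pending
        System.out.println(slot.take());
    }
}
```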

Idiomatic Temporal input processing

While I did not select @UpdateMethod to convey state back to clients, it does seem to be a better fit than a @SignalMethod when it comes to processing end-user input. It has specific support for lightweight validation and feedback, so that only valid updates are recorded into history, which makes the history easier to read later.

Revisiting:

    @SignalMethod
    void playerAction(Action action) {
        if (this.action == null && legalAction(action)) {
            this.action = action;
        }
    }

into something like this:

    @UpdateValidatorMethod(updateName = "playerAction")
    void validatePlayerAction(Action action) {
        // throwing here rejects the update and reports the reason back to the caller
        if (this.action != null) {
            throw new IllegalArgumentException("action already set...");
        }
        if (!legalAction(action)) {
            throw new IllegalArgumentException("illegal action...");
        }
    }

    @UpdateMethod
    void playerAction(Action action) {
        // re-check: other workflow code may have run since validation
        if (this.action == null && legalAction(action)) {
            this.action = action;
        } else {
            log.warn("action {} no longer valid, state: {}", action, state);
        }
    }

Note that I didn’t actually remove the validations from the @UpdateMethod, as the execution of these methods can again be interleaved with any workflow processes. This does however add two things:

- feedback to clients in the form of exceptions
- prevents recording and showing typical bogus actions in the event log

Workflows, determinism and side effects

In a programming model where signals can be replayed to bring the workflow back to a previous state, two things are problematic:

- methods that introduce side effects by making changes outside the workflow,
- methods that produce different results each time they are used.

Dealing with side effects

To prevent re-creating any side effects upon replay, all code that triggers side effects must be explicitly run as an Activity. An Activity is also just code, but behind an interface marked as @ActivityInterface. (Depending on configuration though, the activity code may run remotely on a completely different/dedicated microservice.)

The idea behind this is simple. Upon replay, all events will be replayed but activities will not be executed again, preventing unwanted duplication of side effects. Instead, the outcome of an activity is handed back to the workflow by the framework, which serves it from its persisted history.

So if someActivity.doSomething() returned "foo" the first time, it will also return "foo" to the workflow during replay, but its method body will never actually run again.
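The "foo" example can be mimicked with a small recorder in plain Java. This is only a conceptual sketch with invented names (`ActivityRecorder`, `call`); Temporal's real history contains far more than a list of results.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;

/** Toy recorder illustrating the idea; Temporal's real history is far richer. */
final class ActivityRecorder {
    private final List<String> history; // results recorded so far
    private int position = 0;           // next history entry to consume
    int executions = 0;                 // how often an activity body really ran

    ActivityRecorder(List<String> history) {
        this.history = new ArrayList<>(history);
    }

    /** Runs the activity body once; on replay the recorded result is returned instead. */
    String call(Supplier<String> activityBody) {
        if (position < history.size()) {
            return history.get(position++); // replay: serve from history, skip the body
        }
        executions++;
        String result = activityBody.get();
        history.add(result);
        position++;
        return result;
    }

    List<String> history() {
        return List.copyOf(history);
    }

    public static void main(String[] args) {
        ActivityRecorder firstRun = new ActivityRecorder(List.of());
        System.out.println(firstRun.call(() -> "foo"));
        ActivityRecorder replay = new ActivityRecorder(firstRun.history());
        System.out.println(replay.call(() -> "bar") + ", bodies executed: " + replay.executions);
    }
}
```

During the simulated replay the supplier returning "bar" is never invoked; the workflow sees the recorded "foo" again.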

Note that, like most things in a distributed persisted environment, Temporal guarantees at-least-once delivery. Therefore, Workflow, Signal, Update and Activity methods must still deal with being triggered multiple times in edge cases like partial failures, possibly needing idempotency checks to prevent duplicate processing.
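One common shape for such an idempotency check is a key that travels with the request, sketched here for a funds transfer. `IdempotentTransfers` and its in-memory maps are assumptions made for the example; a real service would keep the processed keys in the same durable store as the balances.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** Hypothetical funds-transfer activity that tolerates being invoked more than once. */
final class IdempotentTransfers {
    private final Map<String, Long> balances = new HashMap<>();
    private final Set<String> processedKeys = new HashSet<>();

    /** Applies the credit only the first time a given key is seen. */
    synchronized boolean credit(String idempotencyKey, String account, long amount) {
        if (!processedKeys.add(idempotencyKey)) {
            return false; // duplicate delivery: already applied, do nothing
        }
        balances.merge(account, amount, Long::sum);
        return true;
    }

    synchronized long balanceOf(String account) {
        return balances.getOrDefault(account, 0L);
    }

    public static void main(String[] args) {
        IdempotentTransfers transfers = new IdempotentTransfers();
        transfers.credit("payout-1", "alice", 100);
        transfers.credit("payout-1", "alice", 100); // retried delivery of the same payout
        System.out.println(transfers.balanceOf("alice"));
    }
}
```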

Dealing with different results

In order to support methods that produce different results each time, the Temporal framework's Workflow class comes with built-in replacements that produce the same results again during replay.

Apart from the structural changes in the baseline code, I also had to replace these sorts of functions with their Temporal counterparts:

Workflow.randomUUID()

Workflow.newRandom()

Workflow.currentTimeMillis()
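Why this matters for a card game shows in a small plain-Java sketch: a shuffle is only reproducible if its source of randomness is. Here a fixed seed plays the role of "the same randomness on replay"; in the workflow itself the `Random` would come from `Workflow.newRandom()` rather than being constructed by hand. The card notation and the `DeterministicShuffle` class are made up for the example.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Random;

final class DeterministicShuffle {
    /** Builds a 52-card deck and shuffles it with the supplied source of randomness. */
    static List<String> shuffledDeck(Random random) {
        List<String> deck = new ArrayList<>();
        for (String suit : List.of("S", "H", "D", "C")) {
            for (String rank : List.of("2", "3", "4", "5", "6", "7", "8", "9", "T", "J", "Q", "K", "A")) {
                deck.add(rank + suit);
            }
        }
        Collections.shuffle(deck, random);
        return deck;
    }

    public static void main(String[] args) {
        List<String> original = shuffledDeck(new Random(42L));
        List<String> replayed = shuffledDeck(new Random(42L)); // same seed, as if replaying
        System.out.println("same order on replay: " + original.equals(replayed));
    }
}
```

With an unseeded `new Random()` the replayed deck would almost certainly come out in a different order, and every card dealt after the restart would contradict what players had already seen.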

Embracing Event-Sourcing

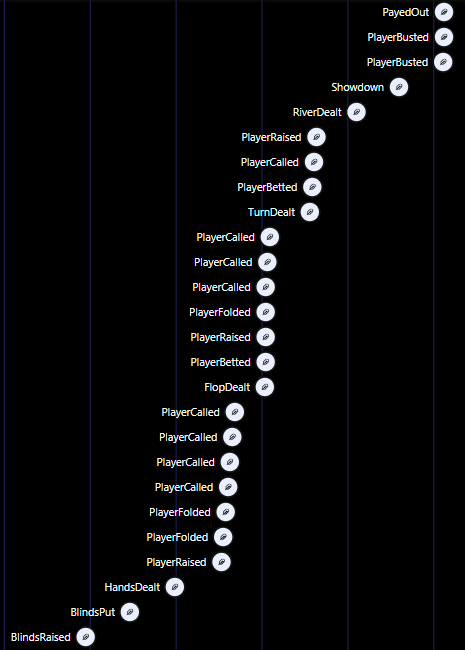

Another adaptation from the original baseline code, which persisted its entire state through one generic event, is going all-in on the event-sourced nature of Temporal by defining small and specific signals and activities, with the framework persisting everything but the actual state itself.

Not only is using specific signals and activities to send back events for everything more idiomatic EDA, it also helps a lot in leveraging the out-of-the-box observability to gain insights!

    @JsonTypeInfo(use = JsonTypeInfo.Id.NAME, property = "type")
    @JsonSubTypes({
        @Type(value = PlayerFolded.class, name = "PlayerFolded"),
        @Type(value = PlayerBetted.class, name = "PlayerBetted"),
        @Type(value = PlayerChecked.class, name = "PlayerChecked"),
        @Type(value = PlayerRaised.class, name = "PlayerRaised"),
        ...
    })
    public sealed interface TableEvent permits
            PlayerFolded, PlayerBetted, PlayerChecked, PlayerRaised, ... {
        UUID tournamentId();
        int tableId();
        long eventId();
        Date occurredAt();
    }

Each concrete record contains at least these four fields, plus anything else specific to its event.
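As an illustration of what such concrete records could look like, here is a trimmed-down, self-contained sketch without the Jackson annotations and with only two event types. The extra fields `playerId` and `amount` are assumptions for the example, not the fields of the actual project.

```java
import java.util.Date;
import java.util.UUID;

/** Trimmed-down version of the event hierarchy, without the Jackson annotations. */
sealed interface TableEvent permits PlayerFolded, PlayerBetted {
    UUID tournamentId();
    int tableId();
    long eventId();
    Date occurredAt();
}

/** The four shared fields plus a (hypothetical) player identifier. */
record PlayerFolded(UUID tournamentId, int tableId, long eventId, Date occurredAt,
                    String playerId) implements TableEvent {}

/** Adds a (hypothetical) bet amount on top of the shared fields. */
record PlayerBetted(UUID tournamentId, int tableId, long eventId, Date occurredAt,
                    String playerId, long amount) implements TableEvent {}

final class TableEventDemo {
    public static void main(String[] args) {
        TableEvent event = new PlayerBetted(UUID.randomUUID(), 3, 7L, new Date(), "alice", 50L);
        // The record components implement the interface's accessor methods automatically.
        System.out.println("table " + event.tableId() + ", event " + event.eventId());
        if (event instanceof PlayerBetted bet) {
            System.out.println(bet.playerId() + " bet " + bet.amount());
        }
    }
}
```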

The events and player actions are all used as separate activities and update methods:

    @ActivityInterface
    public interface TableEventPublisher {
        void playerFolded(PlayerFolded playerFolded);
        void playerChecked(PlayerChecked playerChecked);
        void playerBetted(PlayerBetted playerBetted);
        ...
    }

    @WorkflowInterface
    public interface TableWorkflow {
        @UpdateMethod void onFold(Fold fold);
        @UpdateMethod void onCheck(Check check);
        @UpdateMethod void onBet(Bet bet);
        ...
        @UpdateValidatorMethod(updateName = "onFold") void validateFold(Fold fold);
        @UpdateValidatorMethod(updateName = "onCheck") void validateCheck(Check check);
        @UpdateValidatorMethod(updateName = "onBet") void validateBet(Bet bet);
        ...
    }

When running the actual game, the built-in dashboard gives a nice overview, updated in real time as the game goes on (filtered on Activities for brevity).

Learnings so far

- Development of a long-running stateful process feels quite natural, without any particularly invasive behavior or altering of the general developer experience of typical software development. This is quite unlike other workflow frameworks, which tend to be based on (BPMN) model-first approaches.
- Signal handlers must be implemented in a very specific way, such that they queue their signal, to not introduce subtle bugs (more on this in the next part). The framework does not shield the user from implementing code that seemingly works but isn’t truly correct. I guess this is the other edge of the sword when it comes to full freedom to model the workflow directly in code (wrongly).
- Overall, Temporal sets up a nice environment for workflows to run in: completely restartable, observable and deterministic, based on event sourcing.
- Choosing how to share the workflow state with clients seems to have a high impact on the overall code/flow and should not be considered an afterthought.

Next up…

Stay tuned for getting down and dirty with hardening the workflow for recreation from state, continuation & communication.